Competitor prices shift constantly. Amazon sellers reprice inventory every fifteen minutes during peak seasons. Airlines adjust fares multiple times per day. SaaS platforms quietly charge different rates depending on where you browse from. If you're running an e-commerce business or a market research team, keeping up with these changes manually is not realistic—and automated scripts without proper proxy infrastructure will get blocked within hours.

Why Residential Proxies—Not Datacenter—for Price Monitoring

Price monitoring requires collecting data from the same sites repeatedly, often multiple times per day. That repetitive access pattern frequently results in IP-level rate limits or request blocks. In this context, the core difference between datacenter and residential proxies is the trust profile of their IP addresses.

Datacenter proxies are fast and cheap, but e-commerce platforms like Amazon, Walmart, and Target flag their IP ranges aggressively. These IPs originate from hosting providers, not ISPs, and major retailers detect them within minutes.

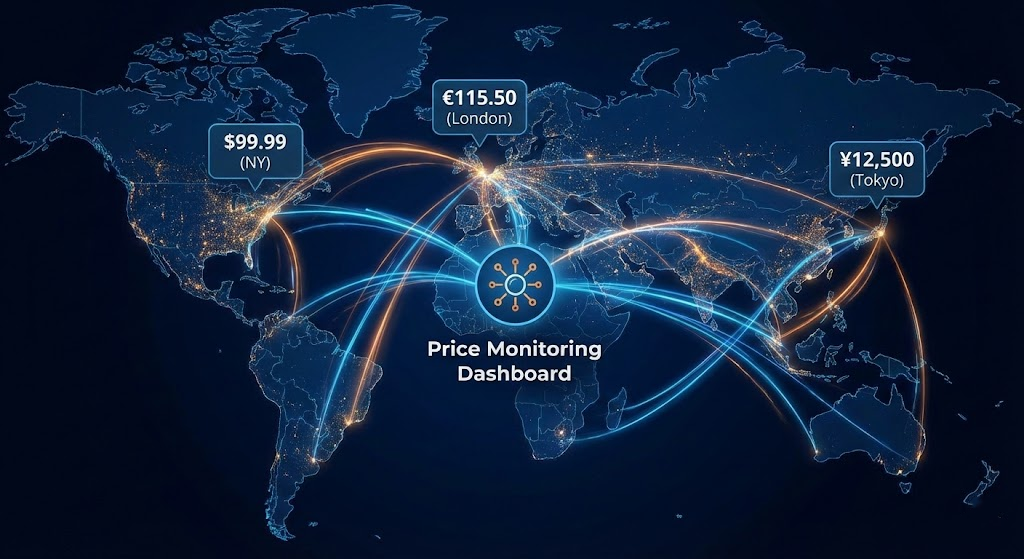

Residential proxies route traffic through IPs assigned by ISPs to real households. Because these are standard consumer IP addresses, requests sent through them carry the same trust profile as regular internet traffic. This matters specifically for price monitoring because many retailers serve different prices based on the visitor's location. A residential IP from Dallas retrieves the same pricing a Dallas-based customer would see, including regional promotions and geo-targeted discounts. Preliminary findings from the FTC's surveillance pricing study, published in January 2025, reported that details such as a person's location and browsing history can be used to set individualized prices.

Static residential (ISP) proxies maintain the same IP for extended periods while retaining the trust profile of a residential address. These suit monitoring tasks requiring session persistence, such as tracking prices through multi-page checkout flows.

For most price monitoring, rotating residential proxies are the primary tool. They assign a different IP per request, distributing your traffic across thousands of addresses and keeping each IP's request count below rate-limit thresholds.

Prerequisites

Before writing any code, set up the following:

Environment:

Python 3.8+ installed (3.10+ recommended)

pip packages:

requests, beautifulsoup4, lxml, pandas (install via pip install requests beautifulsoup4 lxml pandas)

Proxy subscription: You need a residential proxy provider with geo-targeting support and rotating IP capability. When evaluating proxy providers for price monitoring, prioritize these criteria:

IP pool size: Pools under 10 million IPs will struggle with large-scale daily monitoring. Look for providers adding fresh IPs regularly.

Geo-targeting granularity: Country-level is standard; city-level matters if you need localized price comparisons.

Rotation options: Per-request rotation for monitoring, plus sticky sessions for checkout-flow tracking.

Bandwidth pricing: Price monitoring generates moderate bandwidth per page (typically 1–5 MB). Per-GB pricing is usually more cost-effective than per-IP models for this use case.

Protocol support: HTTP, HTTPS, and SOCKS5 compatibility with Python requests, Scrapy, Puppeteer, and Selenium.
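To make the bandwidth-pricing criterion concrete, here is a rough back-of-the-envelope estimate. It is a sketch only: the function name, the per-page size, and the price per GB are illustrative assumptions, and retries or headless-browser rendering would push real usage higher.

```python
def estimate_monthly_cost(products, locations, runs_per_day,
                          mb_per_page, price_per_gb, days=30):
    """Return (gigabytes, cost) for one month of monitoring.

    Assumes one request per product per location per run and
    ignores retries, so treat the result as a lower bound.
    """
    requests_per_month = products * locations * runs_per_day * days
    gigabytes = requests_per_month * mb_per_page / 1024
    return gigabytes, gigabytes * price_per_gb


# Hypothetical inputs: 100 products, 3 locations, 4 runs per day,
# 2 MB per page, and a placeholder rate of $5.00 per GB.
gb, cost = estimate_monthly_cost(
    products=100, locations=3, runs_per_day=4,
    mb_per_page=2.0, price_per_gb=5.0,
)
print(f"{gb:.1f} GB/month, about ${cost:.2f}")
```

Plugging in your own product count and your provider's actual rate gives a quick way to compare per-GB plans before subscribing.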

From your provider's dashboard, collect: the proxy gateway hostname, port, and your authentication credentials (username/password or IP whitelist).

Target URLs: A list of product pages you want to monitor. Start small—5 to 10 products—before scaling.

Legal check: Before scraping any site, review https://targetsite.com/robots.txt and the site's Terms of Service. If automated access to pricing pages is disallowed, do not proceed with that target. More on compliance at the end of this guide.
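The robots.txt part of this check can be automated with Python's standard library. The sketch below parses robots.txt content that you have already fetched; the sample rules and the helper name is_path_allowed are made up for illustration, and the check does not replace reading the Terms of Service.

```python
from urllib.robotparser import RobotFileParser


def is_path_allowed(robots_txt, url, user_agent="*"):
    """Return True if the given robots.txt text permits fetching url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


# Illustrative rules only; fetch the real file from your target site.
SAMPLE_ROBOTS = """\
User-agent: *
Disallow: /checkout/
Allow: /product/
"""

print(is_path_allowed(SAMPLE_ROBOTS, "https://targetsite.com/product/laptop-stand"))
print(is_path_allowed(SAMPLE_ROBOTS, "https://targetsite.com/checkout/cart"))
```

Against a live site, RobotFileParser can also download the file itself via set_url() followed by read().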

Step-by-Step: Building a Price Monitoring Script with Residential Proxies

Step 1 — Configure Proxy Authentication

Your script connects to a single gateway hostname provided by your proxy service; the provider handles IP rotation on the backend.

    import requests

    # Retrieve these from your proxy provider's dashboard
    PROXY_HOST = "your-provider-gateway.com"  # e.g., gate.example.com
    PROXY_PORT = "PORT_NUMBER"  # e.g., 10001
    PROXY_USER = "your_username"
    PROXY_PASS = "your_password"

    proxy_url = f"http://{PROXY_USER}:{PROXY_PASS}@{PROXY_HOST}:{PROXY_PORT}"

    proxies = {
        "http": proxy_url,
        "https": proxy_url,
    }

    # Test connectivity
    response = requests.get("https://httpbin.org/ip", proxies=proxies, timeout=15)
    print(response.json())  # Should show a residential IP, not your real IP

If the test returns a JSON object with an IP address different from your own, the proxy connection is working. If it times out or returns your real IP, double-check the gateway hostname and credentials in your provider's dashboard.
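Hard-coded credentials are fine for a first test, but two things tend to go wrong later: secrets end up in version control, and passwords containing characters such as @ or : break the proxy URL. The following sketch reads the values from environment variables and percent-encodes them. The variable names (PROXY_HOST and so on) and helper names are assumptions, not something your provider defines.

```python
import os
from urllib.parse import quote


def build_proxy_url(host, port, user, password, scheme="http"):
    """Build a proxy URL with percent-encoded credentials."""
    user_enc = quote(user, safe="")
    pass_enc = quote(password, safe="")
    return f"{scheme}://{user_enc}:{pass_enc}@{host}:{port}"


def proxies_from_env():
    """Read proxy settings from (assumed) environment variable names."""
    url = build_proxy_url(
        host=os.environ["PROXY_HOST"],
        port=os.environ["PROXY_PORT"],
        user=os.environ["PROXY_USER"],
        password=os.environ["PROXY_PASS"],
    )
    return {"http": url, "https": url}


# Example with a password that would otherwise corrupt the URL
print(build_proxy_url("gate.example.com", 10001, "user", "p@ss:word"))
```

Hyphens are left untouched by quote(), so usernames that carry geo-targeting suffixes such as -country-us pass through unchanged.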

Step 2 — Add Geo-Targeting Parameters

To collect location-specific pricing, configure your proxy requests to exit through IPs in specific regions. Most providers support geo-targeting through username parameters (e.g., appending -country-us or -city-london to your username). The exact syntax varies by provider—check your dashboard documentation for the correct format.

    def get_geo_proxy(country="us", city=None):
        """
        Build proxy URL with geographic targeting.

        Adjust the username format to match your provider's syntax.
        Common patterns:
        - "user-country-us" (Proxy001, Bright Data style)
        - "user-cc-us" (Oxylabs style)
        - "user.country.us.city.nyc" (dot-separated style)
        """
        geo_user = f"{PROXY_USER}-country-{country}"
        if city:
            geo_user += f"-city-{city}"
        proxy_url = f"http://{geo_user}:{PROXY_PASS}@{PROXY_HOST}:{PROXY_PORT}"
        return {"http": proxy_url, "https": proxy_url}

    # Example: collect prices as seen from New York, London, and Tokyo
    locations = [
        {"country": "us", "city": "new_york"},
        {"country": "gb", "city": "london"},
        {"country": "jp", "city": "tokyo"},
    ]

Step 3 — Scrape Product Prices with Error Handling

The scraper below handles retries on failures, rate-limit backoff, and price extraction with CSS selectors:

    from bs4 import BeautifulSoup
    import time
    import random

    HEADERS = {
        "User-Agent": "Mozilla/5.0 (Windows NT 10.0; Win64; x64) "
                      "AppleWebKit/537.36 (KHTML, like Gecko) "
                      "Chrome/120.0.0.0 Safari/537.36",
        "Accept-Language": "en-US,en;q=0.9",
        "Accept": "text/html,application/xhtml+xml,application/xml;q=0.9,*/*;q=0.8",
    }

    def scrape_price(url, proxies, max_retries=3):
        """Fetch a product page and extract the price."""
        for attempt in range(max_retries):
            try:
                response = requests.get(
                    url,
                    proxies=proxies,
                    headers=HEADERS,
                    timeout=20,
                )
                if response.status_code == 200:
                    soup = BeautifulSoup(response.text, "lxml")
                    # --- Finding the right selector for your target site ---
                    # 1. Open the product page in Chrome/Firefox
                    # 2. Right-click the price element → Inspect
                    # 3. In DevTools, right-click the highlighted HTML →
                    #    Copy → Copy selector
                    # 4. Replace the selector string below with your result
                    #
                    # Common price selectors on major sites:
                    #   Amazon:  ".a-price .a-offscreen"
                    #   Shopify: ".price__regular .price-item"
                    #   Generic: "[data-testid='price'], .product-price, .price"
                    price_elem = soup.select_one(
                        '[data-testid="price"], .price, .a-price .a-offscreen'
                    )
                    if price_elem:
                        price_text = price_elem.get_text(strip=True)
                        return {"url": url, "price": price_text, "status": "success"}
                    else:
                        return {"url": url, "price": None, "status": "selector_miss"}
                elif response.status_code == 403:
                    # A 403 may indicate the site does not permit automated access.
                    # Before retrying, confirm that robots.txt and TOS allow scraping
                    # this page. If access is legitimately permitted, a 403 can also
                    # result from incomplete headers or temporary IP-level rate limits.
                    time.sleep(random.uniform(2, 5))
                    continue
                elif response.status_code == 429:
                    # Rate limited: wait longer before the next attempt
                    time.sleep(random.uniform(10, 20))
                    continue
                else:
                    # Any other status (404, 5xx, ...): pause briefly instead of
                    # retrying immediately
                    time.sleep(random.uniform(2, 5))
            except requests.exceptions.Timeout:
                time.sleep(random.uniform(3, 7))
                continue
            except requests.exceptions.ConnectionError:
                time.sleep(random.uniform(5, 10))
                continue
        return {"url": url, "price": None, "status": "failed_after_retries"}

Two points are worth noting. First, the random delay between retries prevents your script from flooding the server with rapid consecutive requests, which respects the target's infrastructure and aligns with responsible scraping practices. Second, the User-Agent header above is a desktop Chrome string; keep it current, because outdated or missing User-Agent values are a common reason requests get rejected.
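Some servers tell you exactly how long to wait after a 429 by sending a Retry-After header, either as a number of seconds or as an HTTP date. The sketch below honors that header when it is present and falls back to a random delay otherwise. The function name wait_seconds, the 120-second cap, and the fallback range are assumptions chosen for illustration.

```python
import random
from datetime import datetime, timezone
from email.utils import parsedate_to_datetime


def wait_seconds(retry_after, fallback=(10, 20), cap=120, now=None):
    """Return how long to sleep after a 429 response.

    retry_after is the raw Retry-After header value (or None).
    """
    if retry_after:
        value = retry_after.strip()
        if value.isdigit():
            # Delay given directly in seconds
            return min(float(value), cap)
        try:
            target = parsedate_to_datetime(value)
        except (TypeError, ValueError):
            target = None  # Unparseable header: use the fallback below
        if target is not None:
            if target.tzinfo is None:
                target = target.replace(tzinfo=timezone.utc)
            now = now or datetime.now(timezone.utc)
            return min(max((target - now).total_seconds(), 0.0), cap)
    return random.uniform(*fallback)


print(wait_seconds("30"))   # header given in seconds
print(wait_seconds(None))   # no header: random fallback delay
```

Inside the 429 branch of scrape_price, this could replace the fixed range with time.sleep(wait_seconds(response.headers.get("Retry-After"))).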

Step 4 — Run Multi-Location Price Collection

Combine geo-targeting with the scraping function to collect location-specific prices in a single run:

    import pandas as pd
    from datetime import datetime, timezone

    product_urls = [
        # Replace with your actual target product URLs
        "https://target-store.com/product/wireless-headphones",
        "https://target-store.com/product/laptop-stand",
    ]

    results = []

    for url in product_urls:
        for loc in locations:
            proxies = get_geo_proxy(country=loc["country"], city=loc.get("city"))
            result = scrape_price(url, proxies)
            result["country"] = loc["country"]
            result["city"] = loc.get("city", "any")
            # Timezone-aware UTC timestamp (datetime.utcnow() is deprecated
            # as of Python 3.12)
            result["timestamp"] = datetime.now(timezone.utc).isoformat()
            results.append(result)
            # Respectful delay between requests
            time.sleep(random.uniform(3, 8))

    # Save to CSV for analysis
    df = pd.DataFrame(results)
    df.to_csv(f"prices_{datetime.now().strftime('%Y%m%d_%H%M')}.csv", index=False)
    print(df.to_string(index=False))

This produces a timestamped CSV with one row per product-location combination. Run this on a schedule (e.g., via cron or a task scheduler), and you'll accumulate the historical dataset needed for the comparison step below.
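Once scheduled runs start piling up, you need a way to pick the files to compare. The sketch below selects the two most recent runs by parsing the timestamp embedded in the prices_YYYYMMDD_HHMM.csv naming scheme used above. The helper name latest_two_runs is hypothetical.

```python
import re
from datetime import datetime

# Matches the naming scheme used when saving each collection run
RUN_PATTERN = re.compile(r"prices_(\d{8}_\d{4})\.csv$")


def latest_two_runs(filenames):
    """Return (previous, latest) run files, or None if fewer than two exist."""
    dated = []
    for name in filenames:
        match = RUN_PATTERN.search(name)
        if match:
            stamp = datetime.strptime(match.group(1), "%Y%m%d_%H%M")
            dated.append((stamp, name))
    dated.sort()
    if len(dated) < 2:
        return None
    return dated[-2][1], dated[-1][1]


files = ["prices_20260212_0900.csv", "notes.txt", "prices_20260211_0900.csv"]
print(latest_two_runs(files))
```

In practice the list would come from something like glob.glob("prices_*.csv") in the output directory.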

Step 5 — Analyze Price Differences Across Locations

Once you have CSV files from one or more collection runs, use the following to turn raw prices into comparative insights:

    import pandas as pd
    import re

    # Load one or more collection runs
    df = pd.read_csv("prices_20260212_0900.csv")

    # Clean price strings into numeric values
    def parse_price(price_str):
        """Extract a number from strings like '$49.99', '€54,99', '¥5,980', '$1,299.99'."""
        if pd.isna(price_str):
            return None
        match = re.search(r"\d[\d.,]*", str(price_str))
        if not match:
            return None
        num = match.group(0).rstrip(".,")
        if "," in num and "." in num:
            # Both separators present: the rightmost one is the decimal mark
            if num.rfind(",") > num.rfind("."):
                num = num.replace(".", "").replace(",", ".")
            else:
                num = num.replace(",", "")
        elif "," in num:
            # Only commas: one comma followed by exactly two digits is read as a
            # decimal ('54,99'); anything else as thousands separators ('5,980')
            head, _, tail = num.rpartition(",")
            if len(tail) == 2 and "," not in head:
                num = f"{head}.{tail}"
            else:
                num = num.replace(",", "")
        try:
            return float(num)
        except ValueError:
            return None

    df["price_numeric"] = df["price"].apply(parse_price)

    # --- Comparison 1: Geographic price spread per product ---
    # Note: the spread is only meaningful when all prices for a product are in
    # the same currency; convert to a common currency first if they are not.
    geo_spread = df.groupby("url")["price_numeric"].agg(["min", "max", "mean"])
    geo_spread["spread_pct"] = ((geo_spread["max"] - geo_spread["min"]) / geo_spread["min"] * 100).round(1)
    print("=== Geographic Price Spread ===")
    print(geo_spread.to_string())
    # A spread_pct above 10-15% signals significant geo-pricing worth monitoring closely.

    # --- Comparison 2: Cheapest location per product ---
    # Drop rows without a parsed price so idxmin() never sees an all-NaN group
    valid = df.dropna(subset=["price_numeric"])
    cheapest = valid.loc[valid.groupby("url")["price_numeric"].idxmin()]
    print("\n=== Cheapest Location Per Product ===")
    print(cheapest[["url", "country", "city", "price"]].to_string(index=False))

    # --- Comparison 3: Day-over-day change detection (requires multiple runs) ---
    # Load yesterday's and today's data
    # df_yesterday = pd.read_csv("prices_20260211_0900.csv")
    # df_today = pd.read_csv("prices_20260212_0900.csv")
    # for frame in (df_yesterday, df_today):
    #     frame["price_numeric"] = frame["price"].apply(parse_price)
    # merged = df_today.merge(df_yesterday, on=["url", "country", "city"],
    #                         suffixes=("_today", "_yesterday"))
    # merged["change_pct"] = ((merged["price_numeric_today"] - merged["price_numeric_yesterday"])
    #                         / merged["price_numeric_yesterday"] * 100).round(1)
    # alerts = merged[merged["change_pct"].abs() > 5]  # Flag changes over 5%
    # print("\n=== Price Change Alerts (>5%) ===")
    # print(alerts[["url", "country", "city", "change_pct"]].to_string(index=False))

What each comparison tells you:

Geographic spread reveals which products have the largest regional price gaps—these are the candidates where geo-targeted sourcing or regional advertising would have the biggest margin impact.

Cheapest location identifies where competitors offer the lowest price, which can inform your own geo-pricing strategy or highlight markets where a competitor is running promotions.

Day-over-day change detection (uncomment when you have multiple runs) flags sudden price movements. A 10%+ drop from a competitor often means a flash sale or inventory clearance; a sudden spike could indicate a supply shortage.
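One caveat applies to the geographic spread: the example locations (us, gb, jp) may return prices in different local currencies, and comparing raw numbers across currencies is meaningless. The sketch below normalizes prices to USD before computing the spread. Everything in it is an assumption for illustration: the country-to-currency mapping presumes each site shows local currency, and the rates are placeholders, not real exchange rates.

```python
# Assumed mapping; verify which currency your target site actually displays
CURRENCY_BY_COUNTRY = {"us": "USD", "gb": "GBP", "jp": "JPY"}

# PLACEHOLDER values (USD per 1 unit of currency), not real exchange rates.
# Load current rates from a source you trust before using the output.
PLACEHOLDER_USD_RATES = {"USD": 1.0, "GBP": 1.25, "JPY": 0.0065}


def to_usd(amount, country, rates=PLACEHOLDER_USD_RATES):
    """Convert a local-currency amount to USD, or None if it cannot be converted."""
    if amount is None:
        return None
    rate = rates.get(CURRENCY_BY_COUNTRY.get(country))
    if rate is None:
        return None
    return round(amount * rate, 2)


def spread_pct(prices_usd):
    """Percentage gap between the highest and lowest known price."""
    known = [p for p in prices_usd if p is not None]
    if len(known) < 2 or min(known) == 0:
        return None
    return round((max(known) - min(known)) / min(known) * 100, 1)


observed = [(49.99, "us"), (40.0, "gb"), (8000, "jp")]
normalized = [to_usd(amount, country) for amount, country in observed]
print(normalized, spread_pct(normalized))
```

With pandas, the same conversion could be applied row by row to price_numeric and country before the groupby in Comparison 1.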

Step 6 — Verify Your Setup Is Working Correctly

Before relying on the data, confirm three things:

IP diversity: Run 10 consecutive requests through your proxy to https://httpbin.org/ip and verify you receive different IP addresses each time. If you see the same IP repeated, check whether you need to enable per-request rotation in your provider's dashboard.

Geo-accuracy: Use https://ipinfo.io/json through a geo-targeted proxy and confirm the returned location matches your target country/city. If the geolocation is off, try a broader target (country instead of city), since city-level availability varies by region.

Price variance: Compare the prices your scraper collects against what you see when manually visiting the same page from a browser with a VPN set to the same location. If prices are consistently identical regardless of proxy location, the site may not use geo-targeted pricing for that product, or it may require JavaScript rendering to load dynamic prices (see Troubleshooting below).
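The IP diversity check is easy to script. The sketch below counts distinct exit IPs over a number of attempts; it takes the fetching step as a function argument so the counting logic can be tried offline. The helper name check_ip_diversity and the returned keys are illustrative choices.

```python
from collections import Counter


def check_ip_diversity(fetch_ip, attempts=10):
    """Call fetch_ip() repeatedly and summarize how many distinct IPs came back."""
    seen = Counter()
    errors = 0
    for _ in range(attempts):
        try:
            seen[fetch_ip()] += 1
        except Exception:
            # Broad catch for a diagnostic script: count the failure and move on
            errors += 1
    return {
        "unique_ips": len(seen),
        "most_repeated": max(seen.values(), default=0),
        "errors": errors,
    }


# Offline demo with a stand-in fetcher. With a real proxy you might pass:
#   lambda: requests.get("https://httpbin.org/ip",
#                        proxies=proxies, timeout=15).json()["origin"]
fake_ips = iter(["203.0.113.5", "198.51.100.7", "203.0.113.5"])
print(check_ip_diversity(lambda: next(fake_ips), attempts=3))
```

A unique_ips count close to the number of attempts suggests per-request rotation is active; a count of 1 suggests a sticky session.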

Troubleshooting Common Failures

All requests return 403 Forbidden. The target site may be returning errors because the request is missing standard browser headers. Ensure you include Accept, Accept-Language, and Accept-Encoding headers, since incomplete HTTP requests are often rejected by web servers regardless of IP source. Also verify that your proxy is residential, not datacenter, since some sites restrict access from hosting IP ranges. If the page relies on JavaScript to render pricing content, a simple HTTP request won't load the data; use a headless browser like Playwright or Selenium, which fully render the page before you parse it.

Prices are identical across all locations. Either the site doesn't use geo-pricing for that product, or the price is rendered client-side via JavaScript and your HTTP-only scraper is not loading it. First confirm geo-targeting is working (check exit IP location via ipinfo.io). If IPs are correctly located but prices still match across regions, switch to a headless browser approach as described in the 403 fix above, because the same JavaScript rendering issue applies here.

Script stops collecting data after a few hours. Session tokens expired, bandwidth quota reached, or the site's request-handling configuration changed. Check your provider's dashboard for usage stats and remaining bandwidth. Ensure rotation is per-request (not sticky session) for monitoring tasks. If errors increase over time, reduce your request frequency: spacing requests 10–30 seconds apart with some variance is a responsible collection rate that minimizes server load and keeps your pipeline sustainable long-term.

selector_miss status on most results. The site's HTML structure changed, or it serves a different layout to suspected bots. Revisit the page in your browser, re-inspect the price element, and update your CSS selector. For sites that frequently change layouts, use multiple fallback selectors (as shown in the code) and add logging so you're alerted when all selectors fail.
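A minimal sketch of that fallback-plus-logging idea follows. It tries selectors one at a time in priority order and logs a warning when none match. The selector list, logger name, and function name extract_price are assumptions; it uses BeautifulSoup's built-in html.parser so it runs without lxml.

```python
import logging

from bs4 import BeautifulSoup

logger = logging.getLogger("price_monitor")

# Ordered from most specific to most generic; adjust for your target site
PRICE_SELECTORS = [
    '[data-testid="price"]',
    ".a-price .a-offscreen",
    ".product-price",
    ".price",
]


def extract_price(html, url, selectors=PRICE_SELECTORS):
    """Return (price_text, selector_used), or (None, None) if every selector misses."""
    soup = BeautifulSoup(html, "html.parser")
    for selector in selectors:
        elem = soup.select_one(selector)
        if elem and elem.get_text(strip=True):
            return elem.get_text(strip=True), selector
    logger.warning("All %d price selectors missed for %s", len(selectors), url)
    return None, None


sample = '<div><span class="price">$10.00</span><span data-testid="price">$9.50</span></div>'
print(extract_price(sample, "https://target-store.com/product/laptop-stand"))
```

Trying selectors individually differs from a single comma-joined selector, which returns whichever matching element appears first in the document rather than the one you trust most. Recording which selector matched also makes layout changes easier to spot in your logs.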

Compliance and Ethical Boundaries

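Price monitoring via web scraping is legally permissible in many contexts, but boundaries matter. In hiQ v. LinkedIn (2022), the Ninth Circuit held that scraping publicly accessible data likely does not violate the CFAA. That is not the whole picture: the same litigation later produced a district court finding that hiQ had breached LinkedIn's user agreement, so Terms of Service can still create enforceable contractual obligations. In Meta v. Bright Data (2024), the court found that Meta's terms did not bar logged-out scraping of public data, which underlines that the outcome depends on what the terms actually cover and whether you have agreed to them.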

Respect these principles to stay on solid ground:

Follow robots.txt directives. robots.txt is not legally binding on its own, but it is a clear statement of the site operator's wishes. Ignoring it undermines any good-faith defense.

Limit scope to public pricing data. Do not scrape behind login walls, collect personally identifiable information, or access content explicitly restricted by the site's terms.

Minimize server impact. Space out requests, implement exponential backoff on errors, and avoid parallel request floods. Keep your request rate comparable to what a single user would generate during normal browsing.

Watch for PII. Price monitoring typically involves product data, but review your pipeline to ensure no personal information (user reviews with names, seller contact info) leaks into your dataset—especially when scraping EU-based sites where GDPR applies.

Document your methodology. Maintain records of what you collect, how often, and for what purpose. If challenged, this documentation demonstrates legitimate commercial use.
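The exponential backoff mentioned under "Minimize server impact" can be sketched as follows. This version uses "equal jitter": half of the delay is fixed and half is random, so the minimum wait still grows with each failed attempt. The base, cap, and function name backoff_delay are illustrative assumptions.

```python
import random


def backoff_delay(attempt, base=2.0, cap=60.0, rng=random):
    """Seconds to wait before retry number `attempt` (0-based).

    The ceiling doubles on each attempt up to `cap`; the returned value
    falls between half the ceiling and the full ceiling.
    """
    ceiling = min(cap, base * (2 ** attempt))
    return ceiling / 2 + rng.uniform(0, ceiling / 2)


for attempt in range(5):
    print(attempt, round(backoff_delay(attempt), 2))
```

In the scraper from Step 3, a call such as time.sleep(backoff_delay(attempt)) could replace the fixed random ranges in the retry branches.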

Scale Your Price Intelligence with Proxy001

If you're building a price monitoring pipeline that needs reliable residential IPs with broad geographic coverage, Proxy001 is worth evaluating. The platform offers access to over 100 million residential IPs across 200+ countries, with both automatic rotation and sticky sessions (up to 60 minutes), full HTTP/HTTPS/SOCKS5 protocol support, and unlimited concurrent connections—starting at $0.70/GB. Proxy001 provides integration examples for Python, Node.js, Puppeteer, and Selenium, so you can connect it to the scraping workflow above without rewriting your code. A free trial is available to validate geo-targeting accuracy and rotation behavior on your specific target sites before committing. Get started at proxy001.com.
