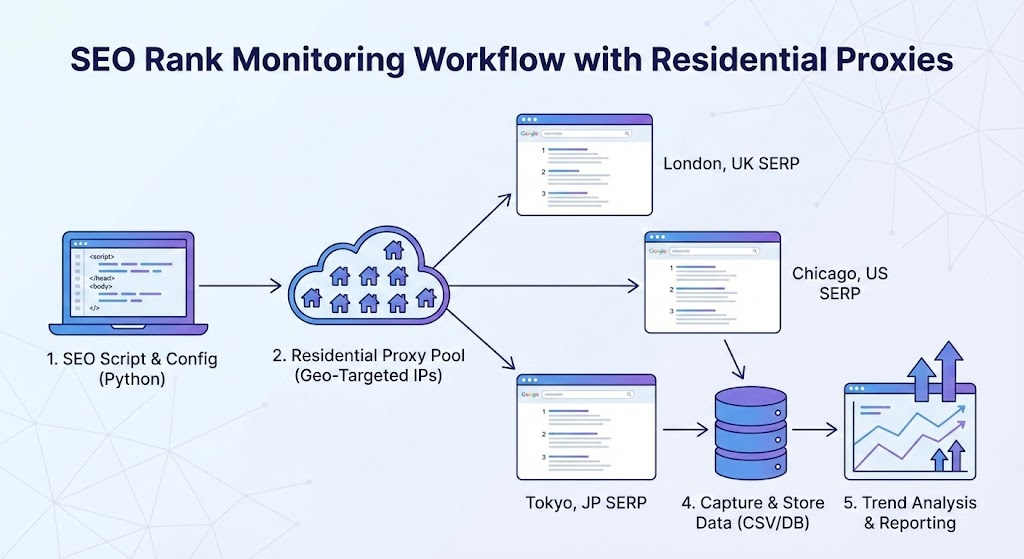

Accurate ranking data is the foundation of any workable SEO strategy. Yet the search results you see on your own screen are rarely what your target audience sees. Google tailors SERPs according to the searcher's IP address, physical location, device type, language settings, and browsing history—and the gap between "your" SERP and "theirs" is wider than most teams realize.

A Go Fish Digital geo-personalization study published in October 2025 analyzed a single keyword across all 50 U.S. states and their largest cities. Switching from a state-level to a city-level location signal caused ranking positions to shift in 19 states, and in 24 cities the tracked publisher vanished from page one entirely—despite strong state-level performance. SerpApi's technical research from December 2025 reached a similar conclusion: even the difference of a single city can alter 50–60% of visible SERP results.

For SEO professionals managing multi-market campaigns, this means you cannot evaluate real search visibility from a single vantage point. Residential proxies solve this by letting you query Google through genuine household IPs in your target locations.

Why Datacenter IPs and Manual Checks Fall Short

Manual spot-checking from your own browser gives you one data point from one location, filtered through your search history and account signals. Even incognito mode only strips cookies—it does not mask your IP or prevent Google from inferring regional context.

Datacenter proxies are fast and cheap, but their IP ranges are easily identified through ASN lookups. Google's January 2025 anti-scraping update tightened enforcement against automated queries with more frequent CAPTCHA challenges, enhanced IP-based rate limiting, and behavioral analysis targeting non-human traffic. Several major SEO platforms, including SEMrush and SimilarWeb, experienced significant disruptions during this update because parts of their infrastructure relied on datacenter-based scraping.

Residential proxies route requests through IPs assigned by real ISPs (Comcast, Vodafone, BT, etc.) to actual households. Google treats this traffic as ordinary consumer browsing—lower CAPTCHA rates, accurate local pack and featured snippet rendering, and SERP data that reflects what real users see.
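As a rough illustration of how the ISP-versus-hosting distinction can be checked in code, the sketch below classifies the "org" string that an IP lookup service returns. The keyword list and the function name `looks_like_datacenter` are assumptions made for this example, not a complete or authoritative registry of hosting providers.

```python
# Hypothetical keyword list: extend it with the hosting companies you actually encounter.
HOSTING_KEYWORDS = ("amazon", "digitalocean", "ovh", "hetzner", "linode")


def looks_like_datacenter(org: str) -> bool:
    """Return True when an 'org' string names one of the listed hosting companies."""
    org_lower = org.lower()
    return any(keyword in org_lower for keyword in HOSTING_KEYWORDS)


print(looks_like_datacenter("AS14061 DigitalOcean, LLC"))                 # True
print(looks_like_datacenter("AS7922 Comcast Cable Communications, LLC"))  # False
```

A keyword match like this is only a first-pass filter; an unfamiliar org name still deserves a manual look.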

Step 1: Choose the Right Proxy Type

Rotating residential proxies assign a different IP per request. Use these for daily keyword sweeps across multiple locations—5,000+ keywords at scale. Each query looks like a different consumer, keeping per-IP request counts far below rate-limiting thresholds.

Static residential (ISP) proxies maintain the same IP for weeks. Use these for session-persistent tasks: monitoring a specific local pack daily, tracking personalized result changes, or running multi-step audits where IP consistency matters.

Providers like Proxy001 offer both types under a single account with city-level geo-targeting, which simplifies management when you need both approaches.

Step 2: Verify Geographic Accuracy Before Collecting Any Data

This is the step most teams skip, and it's where bad data originates. A proxy advertised as "Chicago" that geolocates to Dallas gives you Dallas SERPs. Always verify before trusting.

# Prerequisites: curl installed, proxy credentials from your provider
curl -x http://USERNAME:PASSWORD@proxy-gateway.example.com:PORT \
  https://ipinfo.io/json

# Check in the response:
# - "city" matches your target (e.g., "Chicago")
# - "org" shows a consumer ISP (Comcast, AT&T), NOT a hosting company
#   (if you see "Amazon.com" or "DigitalOcean", it's a datacenter IP)

For batch verification across multiple IPs, use this script:

"""

Proxy Geo-Accuracy Verifier

Prerequisites: Python 3.8+, requests (pip install requests)
Purpose: Confirm proxy IPs resolve to the intended target city
"""

import random
import time

import requests

PROXY_GATEWAY = "http://USER:PASS@gateway.example.com:PORT"
TARGET_CITY = "Chicago"
NUM_TESTS = 10

proxies = {"http": PROXY_GATEWAY, "https": PROXY_GATEWAY}
match_count = 0

for i in range(NUM_TESTS):
    try:
        resp = requests.get("https://ipinfo.io/json", proxies=proxies, timeout=15)
        data = resp.json()
        city = data.get("city", "Unknown")
        org = data.get("org", "Unknown")
        matched = TARGET_CITY.lower() in city.lower()
        match_count += matched
        print(f"Test {i+1}: {'MATCH' if matched else 'MISMATCH'} | "
              f"IP: {data.get('ip')} | City: {city} | ISP: {org}")
    except Exception as e:
        print(f"Test {i+1}: ERROR — {e}")
    # Pause between tests so the gateway has time to rotate to a new IP
    time.sleep(random.uniform(2, 5))

accuracy = match_count / NUM_TESTS * 100
print(f"\nGeo-accuracy: {accuracy:.0f}% ({match_count}/{NUM_TESTS})")
if accuracy < 80:
    print("Below 80% — try a different provider or finer geo-targeting.")

Expected output:

Test 1: MATCH | IP: 73.xxx.xxx.xxx | City: Chicago | ISP: AS7922 Comcast Cable Communications, LLC
Test 2: MATCH | IP: 24.xxx.xxx.xxx | City: Chicago | ISP: AS7018 AT&T Services, Inc.
Test 3: MISMATCH | IP: 68.xxx.xxx.xxx | City: Naperville | ISP: AS7922 Comcast Cable Communications, LLC
...
Geo-accuracy: 80% (8/10)

A nearby suburb appearing occasionally is normal. Results from entirely different states indicate a problem—switch providers or refine your geo-targeting parameters before proceeding.
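To separate "nearby suburb" from "wrong region" automatically, one option is to measure the distance between the target city and the coordinates in the "loc" field ("latitude,longitude") of the ipinfo.io response. The sketch below does that with the haversine formula. The 60 km threshold, the function names, and the hard-coded city coordinates are assumptions chosen for illustration.

```python
import math


def haversine_km(lat1, lon1, lat2, lon2):
    """Great-circle distance in kilometres between two latitude/longitude points."""
    earth_radius_km = 6371.0
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    d_phi = math.radians(lat2 - lat1)
    d_lambda = math.radians(lon2 - lon1)
    a = math.sin(d_phi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(d_lambda / 2) ** 2
    return 2 * earth_radius_km * math.asin(math.sqrt(a))


def classify_geo(loc, target, nearby_km=60.0):
    """Label a 'lat,lon' string as 'nearby' or 'far' relative to the target (lat, lon)."""
    lat, lon = (float(part) for part in loc.split(","))
    return "nearby" if haversine_km(lat, lon, *target) <= nearby_km else "far"


CHICAGO = (41.8781, -87.6298)                      # approximate city-centre coordinates
print(classify_geo("41.7508,-88.1535", CHICAGO))   # Naperville, IL -> nearby
print(classify_geo("32.7767,-96.7970", CHICAGO))   # Dallas, TX -> far
```

Counting "nearby" results as acceptable and treating "far" results as failures gives a stricter accuracy figure than a city-name match alone.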

Step 3: Collect and Store Ranking Data

The goal isn't just checking a position once—it's building a historical dataset you can analyze for trends.

Approach A: Plug Proxies into Your Existing Rank Tracker

Most tools (AccuRanker, SE Ranking, SERPWatcher, Wincher) support custom proxy configuration:

Navigate to Settings → Proxy (or API → Custom Proxy).

Enter your endpoint: http://username:password@gateway:port.

Align locations. If the tool uses Google's uule parameter for city targeting, your proxy IP should match the same city. A mismatch between IP geolocation and uule can cause Google to serve inconsistent results.

Test with 10–20 keywords; compare against a manual check from the same geography before running your full schedule.

The tool handles storage, scheduling, and trend visualization, so you can skip Steps 4 and 5 below.

Approach B: Build a Custom Multi-Location Monitoring Script

For full control—especially if you need custom SERP feature extraction or integration with internal dashboards—build your own. This script tracks multiple keywords across multiple locations and saves results to a timestamped CSV that accumulates over daily runs:

"""

Multi-Location SERP Rank Monitor

Prerequisites: Python 3.8+, requests, beautifulsoup4
Install: pip install requests beautifulsoup4

Outputs: CSV file at data/serp_rankings.csv with columns:
    date, keyword, location, gl, position, url, title, status

Schedule this to run daily (see Step 4) to build historical data.

Compliance: For monitoring your own site and legitimate competitive
research. Respect robots.txt and use responsible request pacing.
"""

import csv
import os
import random
import time
from datetime import date
from urllib.parse import quote_plus

import requests
from bs4 import BeautifulSoup

# ─── CONFIGURATION ───────────────────────────────────────────────
TARGET_DOMAIN = "yourdomain.com"  # domain you want to track
OUTPUT_DIR = "data"
OUTPUT_FILE = os.path.join(OUTPUT_DIR, "serp_rankings.csv")

# Define keywords and target locations.
# Each location needs: a label, Google gl code, and proxy gateway
# pointed at that city.
TRACKING_CONFIG = [
    {
        "location": "Chicago, US",
        "gl": "us",
        "proxy": "http://USER:PASS@gateway.example.com:PORT",
        "keywords": [
            "residential proxy for seo",
            "best rank tracking tool",
            "local serp monitoring",
        ],
    },
    {
        "location": "London, UK",
        "gl": "uk",
        "proxy": "http://USER:PASS@gateway-uk.example.com:PORT",
        "keywords": [
            "residential proxy for seo",
            "best rank tracking tool",
            "local serp monitoring",
        ],
    },
]


# ─── SERP CHECKER ────────────────────────────────────────────────
def check_position(keyword, proxy_url, target_domain, gl="us", hl="en"):
    """Return the first organic position of target_domain for a keyword."""
    proxies = {"http": proxy_url, "https": proxy_url}
    params = f"q={quote_plus(keyword)}&gl={gl}&hl={hl}&num=20&pws=0"
    url = f"https://www.google.com/search?{params}"
    headers = {
        "User-Agent": (
            "Mozilla/5.0 (Windows NT 10.0; Win64; x64) "
            "AppleWebKit/537.36 (KHTML, like Gecko) "
            "Chrome/131.0.0.0 Safari/537.36"
        ),
        "Accept": "text/html,application/xhtml+xml,*/*;q=0.8",
        "Accept-Language": f"{hl};q=0.9",
    }
    try:
        resp = requests.get(url, headers=headers, proxies=proxies, timeout=20)
        if resp.status_code == 429:
            return {"position": None, "status": "rate_limited"}
        if "unusual traffic" in resp.text.lower():
            return {"position": None, "status": "captcha"}

        soup = BeautifulSoup(resp.text, "html.parser")
        position = 0
        seen = set()
        # Google's result markup changes often; adjust these selectors
        # if no results are parsed.
        for div in soup.select("div.tF2Cxc, div.g"):
            link = div.select_one("a[href]")
            title = div.select_one("h3")
            if not (link and title):
                continue  # not an organic result, so it must not consume a position
            href = link.get("href", "")
            if href in seen:
                continue  # nested div.g / div.tF2Cxc pairs repeat the same result
            seen.add(href)
            position += 1
            if target_domain.lower() in href.lower():
                return {
                    "position": position,
                    "url": href,
                    "title": title.get_text(strip=True),
                    "status": "found",
                }
        return {"position": ">20", "status": "not_in_top_20"}
    except Exception as e:
        return {"position": None, "status": f"error: {e}"}


# ─── MAIN: RUN TRACKING AND APPEND TO CSV ────────────────────────
def run_daily_check():
    os.makedirs(OUTPUT_DIR, exist_ok=True)
    file_exists = os.path.isfile(OUTPUT_FILE)
    today = date.today().isoformat()

    with open(OUTPUT_FILE, "a", newline="", encoding="utf-8") as f:
        writer = csv.writer(f)
        if not file_exists:
            writer.writerow([
                "date", "keyword", "location", "gl",
                "position", "url", "title", "status"
            ])

        for config in TRACKING_CONFIG:
            loc = config["location"]
            gl = config["gl"]
            proxy = config["proxy"]
            print(f"\n--- Checking {loc} ---")

            for kw in config["keywords"]:
                result = check_position(kw, proxy, TARGET_DOMAIN, gl=gl)
                row = [
                    today, kw, loc, gl,
                    result.get("position", ""),
                    result.get("url", ""),
                    result.get("title", ""),
                    result.get("status", ""),
                ]
                writer.writerow(row)
                pos = result.get("position", "err")
                print(f" [{pos}] {kw} — {result.get('status')}")
                # 15-25s delay per query: respectful pacing
                time.sleep(random.uniform(15, 25))

    print(f"\nResults appended to {OUTPUT_FILE}")


if __name__ == "__main__":
    run_daily_check()

What this produces: A CSV that grows with each daily run, structured like this:

date,keyword,location,gl,position,url,title,status
2026-02-11,residential proxy for seo,"Chicago, US",us,7,https://yourdomain.com/...,Your Page Title,found
2026-02-11,residential proxy for seo,"London, UK",uk,12,https://yourdomain.com/...,Your Page Title,found
2026-02-11,best rank tracking tool,"Chicago, US",us,>20,,,not_in_top_20
2026-02-12,residential proxy for seo,"Chicago, US",us,5,https://yourdomain.com/...,Your Page Title,found
...
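For a quick look at where things stand, the accumulated CSV can be reduced to the most recent position per keyword and location. The sketch below is one way to do that with the standard library. The helper name `latest_positions` and the inline sample rows (including their URLs) are made up for the example, and the helper takes the CSV as text so it can be tried without a file on disk.

```python
import csv
import io

# Made-up sample rows in the same column layout as the monitoring CSV.
SAMPLE = '''date,keyword,location,gl,position,url,title,status
2026-02-11,residential proxy for seo,"Chicago, US",us,7,https://yourdomain.com/a,Title,found
2026-02-12,residential proxy for seo,"Chicago, US",us,5,https://yourdomain.com/a,Title,found
2026-02-11,best rank tracking tool,"Chicago, US",us,>20,,,not_in_top_20
'''


def latest_positions(csv_text):
    """Map (keyword, location) to the position recorded on the most recent date."""
    latest = {}
    for row in csv.DictReader(io.StringIO(csv_text)):
        key = (row["keyword"], row["location"])
        # ISO dates (YYYY-MM-DD) sort correctly as plain strings.
        if key not in latest or row["date"] > latest[key]["date"]:
            latest[key] = row
    return {key: row["position"] for key, row in latest.items()}


for (keyword, location), position in latest_positions(SAMPLE).items():
    print(f"{keyword} [{location}]: {position}")
```

To run it against real data, read the file contents first and pass them in, for example `latest_positions(open("data/serp_rankings.csv", encoding="utf-8").read())`.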

Step 4: Automate Daily Runs

Schedule the script to run automatically so data accumulates without manual intervention.

Linux/macOS (cron):

# Open crontab editor
crontab -e

# Add this line to run daily at 6:00 AM server time:
0 6 * * * cd /path/to/your/project && /usr/bin/python3 serp_monitor.py >> logs/serp.log 2>&1

Windows (Task Scheduler):

Create a basic task that runs python serp_monitor.py daily at your preferred time, with the working directory set to your project folder.

Tip: Run your check at a consistent time each day. SERP positions fluctuate throughout the day, so same-time comparisons produce cleaner trend data.

Step 5: Analyze Trends and Spot Problems

This script reads your accumulated CSV and generates a weekly comparison report—highlighting which keywords improved, declined, or dropped out of the top 20:

"""

Weekly Rank Comparison Report

Prerequisites: Python 3.8+ (standard library only)
Reads: data/serp_rankings.csv (produced by the monitoring script)
Output: Prints a summary comparing this week vs. last week
"""

import csv
from datetime import date, timedelta

INPUT_FILE = "data/serp_rankings.csv"


def load_rankings():
    """Load CSV into a dict keyed by (date, keyword, location)."""
    rankings = {}
    with open(INPUT_FILE, "r", newline="", encoding="utf-8") as f:
        reader = csv.DictReader(f)
        for row in reader:
            key = (row["date"], row["keyword"], row["location"])
            pos = row["position"]
            # Normalize: numeric positions as int, ">20" as 21, errors as None
            try:
                rankings[key] = int(pos)
            except (ValueError, TypeError):
                rankings[key] = 21 if pos == ">20" else None
    return rankings


def get_latest_position(rankings, keyword, location, before_date, lookback_days=7):
    """Find the most recent recorded position within the lookback window."""
    for i in range(lookback_days):
        d = (before_date - timedelta(days=i)).isoformat()
        key = (d, keyword, location)
        if key in rankings and rankings[key] is not None:
            return rankings[key], d
    return None, None


def generate_report():
    rankings = load_rankings()

    # Collect all unique (keyword, location) pairs
    pairs = {(kw, loc) for (_, kw, loc) in rankings}

    today = date.today()
    one_week_ago = today - timedelta(days=7)
    print(f"=== Weekly Rank Change Report ({one_week_ago} → {today}) ===\n")

    improved, declined, stable, lost, errors = [], [], [], [], []

    for kw, loc in sorted(pairs):
        current_pos, current_date = get_latest_position(rankings, kw, loc, today)
        previous_pos, prev_date = get_latest_position(rankings, kw, loc, one_week_ago)

        if current_pos is None:
            errors.append((kw, loc, "no recent data"))
            continue
        if previous_pos is None:
            errors.append((kw, loc, "no baseline data"))
            continue

        change = previous_pos - current_pos  # positive = improved
        entry = {
            "keyword": kw,
            "location": loc,
            "current": current_pos if current_pos <= 20 else ">20",
            "previous": previous_pos if previous_pos <= 20 else ">20",
            "change": change,
        }

        if current_pos > 20 and previous_pos <= 20:
            lost.append(entry)
        elif change > 0:
            improved.append(entry)
        elif change < 0:
            declined.append(entry)
        else:
            stable.append(entry)

    # Print results grouped by status
    if lost:
        print("DROPPED OUT OF TOP 20:")
        for e in lost:
            print(f" {e['keyword']} [{e['location']}]: "
                  f"was #{e['previous']} → now {e['current']}")

    if declined:
        print("\nDECLINED:")
        for e in sorted(declined, key=lambda x: x["change"]):
            print(f" {e['keyword']} [{e['location']}]: "
                  f"#{e['previous']} → #{e['current']} ({e['change']:+d})")

    if improved:
        print("\nIMPROVED:")
        for e in sorted(improved, key=lambda x: -x["change"]):
            print(f" {e['keyword']} [{e['location']}]: "
                  f"#{e['previous']} → #{e['current']} ({e['change']:+d})")

    if stable:
        print(f"\nSTABLE: {len(stable)} keywords unchanged")

    if errors:
        print(f"\nDATA GAPS: {len(errors)} keywords missing data")
        for kw, loc, reason in errors:
            print(f" {kw} [{loc}]: {reason}")

    print(f"\nSummary: {len(improved)} improved | {len(declined)} declined | "
          f"{len(lost)} dropped out | {len(stable)} stable | {len(errors)} gaps")


if __name__ == "__main__":
    generate_report()

Example output after two weeks of data:

=== Weekly Rank Change Report (2026-02-04 → 2026-02-11) ===

DROPPED OUT OF TOP 20:
 best rank tracking tool [London, UK]: was #18 → now >20

DECLINED:
 local serp monitoring [Chicago, US]: #5 → #8 (-3)

IMPROVED:
 residential proxy for seo [Chicago, US]: #9 → #5 (+4)
 residential proxy for seo [London, UK]: #14 → #11 (+3)

STABLE: 1 keywords unchanged

Summary: 2 improved | 1 declined | 1 dropped out | 1 stable | 0 gaps

What to do with this output:

"Dropped out" keywords need immediate investigation—check if the page is still indexed, if a competitor launched new content, or if Google changed SERP features (e.g., a new AI Overview pushing organic results down).

Declining keywords (especially drops of 3+ positions) signal content that may need updating, additional internal links, or freshness signals.

Troubleshooting Reference

CAPTCHA or "unusual traffic" responses. Increase the delay to 20–30 seconds between queries. Verify your proxy is actually rotating IPs by running the geo-check from Step 2 on consecutive requests and confirming that the reported IP changes. If CAPTCHAs persist even at low request rates, the IP pool may have high abuse scores; try a different provider or request a fresher pool segment.
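A simple way to quantify whether a gateway is rotating is to collect the "ip" value from several consecutive geo-check responses and compute the share of distinct addresses. The sketch below shows only that calculation. The function name `rotation_ratio` and the sample addresses (taken from documentation-reserved ranges) are illustrative assumptions.

```python
def rotation_ratio(observed_ips):
    """Share of distinct IPs among consecutive requests (1.0 means every request differed)."""
    if not observed_ips:
        return 0.0
    return len(set(observed_ips)) / len(observed_ips)


# Sample addresses from documentation-reserved ranges, not real proxy exits.
sample = ["203.0.113.5", "203.0.113.5", "198.51.100.7", "192.0.2.44"]
print(rotation_ratio(sample))  # 0.75
```

A ratio close to 1.0 suggests per-request rotation is working; a ratio near 0 on a plan sold as rotating is worth raising with the provider.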

Rankings don't match manual checks. Re-run geo-verification. If the IP location is correct, check for conflicting uule parameters and ensure your manual comparison uses incognito with location services fully disabled.

Empty or partial results. Inspect raw HTML. EU-based IPs often trigger consent screens—add consent cookies or append &consent=1. If the page requires JavaScript rendering, switch from plain requests to a headless browser (Playwright or Selenium).
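Before parsing, it can help to detect a consent interstitial explicitly so that it is logged as its own status instead of looking like an empty SERP. The sketch below assumes the interstitial is served from the host consent.google.com or contains the English phrase "Before you continue". Both markers are assumptions based on commonly observed behavior and may change, and the function name is hypothetical.

```python
from urllib.parse import urlparse


def is_consent_page(final_url: str, html: str) -> bool:
    """Heuristic check for a consent interstitial (both markers are assumptions)."""
    host = (urlparse(final_url).hostname or "").lower()
    return host == "consent.google.com" or "before you continue" in html.lower()


print(is_consent_page("https://consent.google.com/m?continue=x", ""))                 # True
print(is_consent_page("https://www.google.com/search?q=x", "<html>results</html>"))  # False
```

With the requests library, the final URL after redirects and the body are available as `resp.url` and `resp.text`, so the check can run right after the request returns.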

Inconsistent results across runs. Some day-to-day SERP fluctuation is normal. The weekly report script compares the most recent available position in each 7-day window, which fills gaps left by failed checks but does not smooth out daily noise; a median over each window is more robust if noise is a problem. If variance consistently exceeds ±3 positions for the same keyword, tighten geo-targeting or switch to a static proxy for that market.
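One way to dampen daily fluctuation is to compare the median position over each 7-day window instead of a single day's reading. The sketch below shows the idea on a plain list of daily positions, where None marks a failed check and 21 stands for "not in top 20" as in the report script. The function name `smoothed_position` is hypothetical.

```python
from statistics import median


def smoothed_position(daily_positions):
    """Median of the recorded positions in a window, ignoring failed checks (None)."""
    valid = [p for p in daily_positions if p is not None]
    return median(valid) if valid else None


week = [5, 8, None, 6, 21, 5, 7]   # one outlier day and one failed check
print(smoothed_position(week))     # 6.5
```

The single day at 21 barely moves the median, whereas a last-value comparison taken on that day would have reported a drop out of the top 20.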

Connection timeouts. Implement retry logic with exponential backoff. Set a 20-second hard timeout per request. If timeouts exceed 10% of total requests, your provider's infrastructure in that region is likely insufficient.
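A minimal sketch of retry logic with exponential backoff follows. It wraps any zero-argument callable, so the same helper can guard a proxy request or any other flaky operation. The name `fetch_with_backoff`, the default of four attempts, and the 2-second base delay are assumptions chosen for illustration.

```python
import random
import time


def fetch_with_backoff(fetch, max_attempts=4, base_delay=2.0, sleep=time.sleep):
    """Call fetch() until it succeeds, doubling the wait after each failure."""
    for attempt in range(max_attempts):
        try:
            return fetch()
        except Exception:
            if attempt == max_attempts - 1:
                raise  # out of attempts: surface the last error to the caller
            # Waits of roughly 2s, 4s, 8s..., plus up to 1s of jitter so that
            # parallel workers do not all retry at the same moment.
            sleep(base_delay * (2 ** attempt) + random.uniform(0, 1))
```

A call to the requests library can be wrapped as `fetch_with_backoff(lambda: requests.get(url, proxies=proxies, timeout=20))`. The `sleep` parameter exists so the waiting can be stubbed out in tests.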

Bandwidth Planning

A typical Google SERP page runs 200–500 KB. For planning:

1,000 keywords/day ≈ 0.3–0.7 GB

10,000 keywords/day ≈ 3–7 GB

50,000 keywords/day ≈ 15–35 GB

These estimates already budget roughly 1.5× the baseline page size to cover retries, consent redirects, and heavier SERP pages with AI Overviews.
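The arithmetic behind estimates like these can be sketched as a small calculator: queries per day times page size times an overhead factor. The function name `estimate_gb` is hypothetical, the overhead default reflects the 1.5× budget mentioned above, and the conversion assumes decimal units (1 GB = 1,000,000 KB).

```python
def estimate_gb(keywords_per_day, page_kb, overhead=1.5):
    """Estimated daily proxy traffic in GB for one SERP request per keyword."""
    return keywords_per_day * page_kb * overhead / 1_000_000


low = estimate_gb(10_000, 200)    # 200 KB pages
high = estimate_gb(10_000, 500)   # 500 KB pages
print(f"10,000 keywords/day: {low:.1f} to {high:.1f} GB")  # 3.0 to 7.5 GB
```

Multiply the result by the number of locations tracked per keyword, since each keyword and location pair is a separate request.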

Compliance

Using residential proxies for SEO monitoring is an established industry practice—Ahrefs, SEMrush, and Moz all rely on proxy infrastructure for SERP data collection. Responsible use means respecting robots.txt, maintaining reasonable per-IP request rates, using data for legitimate purposes (market research, competitive analysis, ad verification, your own site's performance monitoring), and choosing providers that source IPs through opt-in consent with GDPR/CCPA compliance.

Ready to Start Tracking?

If you need a residential proxy provider built for SEO monitoring, Proxy001 offers over 100 million residential IPs across 200+ countries with city-level geo-targeting, both rotating and static session options, and HTTP/SOCKS5 protocol support. Response times average under 0.3 seconds with 99.9% uptime, and pay-as-you-go pricing starts at $0.7/GB—practical for teams running anything from weekly local audits to daily multi-market keyword tracking at scale. Visit proxy001.com to explore plans or start a trial.
