You paid for premium rotating residential proxies. You configured rotation. You launched your scraper. And within two or three requests, you're staring at a 403, a CAPTCHA wall, or a connection reset.

We ran into this exact scenario last month while benchmarking proxy providers for an e-commerce price monitoring project. Python requests through a top-tier residential proxy pool—403 on the second request, every single time. Same IP configured in a regular Chrome browser? Page loaded fine. The proxy wasn't the problem. Our HTTP client's TLS fingerprint was.

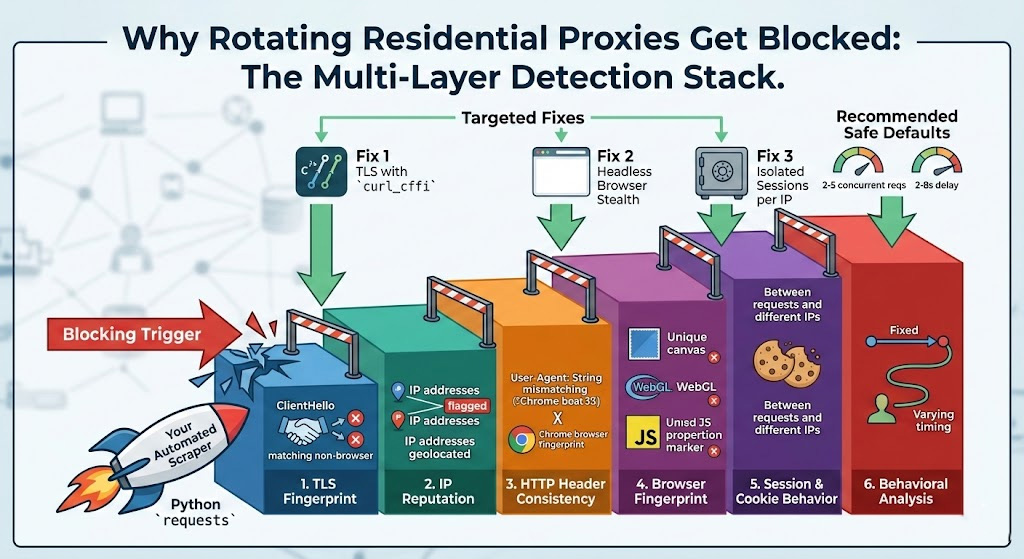

That experience captures the core issue most proxy users miss: modern anti-bot systems don't just check whether your IP is residential. They analyze your TLS handshake, your browser fingerprint, your cookie behavior, and your request cadence. Rotating your IP fixes exactly one of those layers. If the other layers scream "automation," a fresh IP won't save you.

Below: the six specific causes, a diagnostic table to match your symptoms to the root cause, and targeted fixes for each.

Modern Anti-Bot Detection Goes Far Beyond IP Addresses

Anti-bot systems in 2025-2026 operate as multi-layered detection stacks. Each layer evaluates a different signal independently, and failing any single layer can trigger a block—even if every other layer looks clean.

Here's what a typical detection stack evaluates, roughly in order of when it fires:

TLS fingerprint — Before your first byte of HTML arrives. The server inspects your TLS ClientHello to determine whether you're a real browser or an HTTP library.

IP reputation — Your IP's history, ASN classification, subnet abuse patterns, and geographic consistency.

HTTP header consistency — Whether your headers (User-Agent, Accept-Language, Accept-Encoding, Sec-CH-UA) match what a real browser with that TLS fingerprint would send.

Browser fingerprint — Canvas rendering, WebGL renderer strings, installed fonts, screen resolution, timezone, and dozens of other JavaScript-accessible properties.

Session and cookie behavior — Whether cookies persist appropriately within a session and reset appropriately across sessions.

Behavioral analysis — Request timing, navigation patterns, mouse movement, scroll behavior, and whether you follow a natural browsing path.

These layers are independent. Rotating your IP only addresses the second one, IP reputation. If the first layer (TLS) already identified you as a Python script during the handshake, a new residential IP changes nothing: the next request gets flagged just as fast.

6 Reasons Your Rotating Proxies Get Blocked Instantly

1. Your TLS Fingerprint Exposes You Before the First Page Loads

This is the most common reason for getting blocked within 2-3 requests, and it's the one most proxy users never think about.

Every TLS connection starts with a ClientHello message. This message contains your client's supported cipher suites, TLS extensions, elliptic curves, and their ordering. Anti-bot systems hash these values into a fingerprint—known as a JA3 or JA4 fingerprint—and compare it against a database of known signatures.
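To make the hashing step concrete, here is a minimal sketch of how a JA3-style fingerprint is assembled: five ClientHello fields are joined into one string and hashed with MD5. The numeric values below are illustrative only and were not captured from any real client, and the sketch skips the GREASE filtering that real implementations perform.

```python
import hashlib


def ja3_string(tls_version, ciphers, extensions, curves, point_formats):
    """Join the five ClientHello fields: commas between fields, dashes between values."""
    fields = [
        str(tls_version),
        "-".join(str(c) for c in ciphers),
        "-".join(str(e) for e in extensions),
        "-".join(str(c) for c in curves),
        "-".join(str(p) for p in point_formats),
    ]
    return ",".join(fields)


def ja3_hash(tls_version, ciphers, extensions, curves, point_formats):
    """MD5 of the JA3 string, as a 32-character hex digest."""
    raw = ja3_string(tls_version, ciphers, extensions, curves, point_formats)
    return hashlib.md5(raw.encode("ascii")).hexdigest()


# Two hypothetical clients that differ only in cipher suite ordering.
client_a = ja3_hash(771, [4865, 4866, 4867], [0, 23, 65281, 10, 11], [29, 23, 24], [0])
client_b = ja3_hash(771, [4866, 4865, 4867], [0, 23, 65281, 10, 11], [29, 23, 24], [0])

print(client_a)
print(client_b)
print("same fingerprint:", client_a == client_b)
```

Because ordering is part of the hashed string, two clients that support the same ciphers in a different order still produce different fingerprints, which is why an HTTP library rarely matches a browser by accident.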

The problem: Python's requests library, Go's net/http, and Node.js's axios all produce TLS fingerprints that look nothing like Chrome, Firefox, or Safari. According to Salesforce's original JA3 research, each client implementation generates a distinct, recognizable signature. When Cloudflare or Akamai sees a residential IP paired with a Python requests TLS fingerprint, the mismatch is obvious. You're claiming to be a home user browsing with Chrome, but your TLS handshake says you're a script.

We verified this directly: we pointed the same residential IP at a TLS fingerprint checker from both Python requests and curl_cffi with Chrome impersonation. The JA3 hashes were completely different—requests produced a signature that matched no known browser, while curl_cffi matched Chrome 124 exactly.

The newer JA4+ suite, developed by FoxIO, makes this even harder to avoid. JA4 sorts cipher suites and extensions before hashing, which neutralizes the TLS extension randomization Chrome introduced in 2023, and companion fingerprints in the suite cover TCP and HTTP behavior. The result is a more stable and harder-to-spoof identifier.
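The effect of order normalization can be shown with a toy comparison. This is a simplified sketch, not the real JA4 format: it hashes a hypothetical extension list once in the order sent and once after sorting, using a reversed list as a stand-in for a randomized order.

```python
import hashlib


def order_sensitive_id(extensions):
    """Hash extensions in the order they were sent (JA3-style behaviour)."""
    joined = "-".join(str(e) for e in extensions)
    return hashlib.md5(joined.encode("ascii")).hexdigest()


def order_normalized_id(extensions):
    """Sort before hashing, so reordering cannot change the result."""
    joined = "-".join(str(e) for e in sorted(extensions))
    return hashlib.sha256(joined.encode("ascii")).hexdigest()[:12]


# Hypothetical extension IDs; the reversed copy stands in for a shuffled ClientHello.
extensions = [0, 5, 10, 11, 13, 16, 18, 23, 27, 35, 43, 45, 51, 65281]
reordered = extensions[::-1]

print(order_sensitive_id(extensions) == order_sensitive_id(reordered))
print(order_normalized_id(extensions) == order_normalized_id(reordered))
```

The order-sensitive hash changes whenever the client reorders its extensions, while the normalized one stays fixed, so shuffling alone no longer hides a client.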

Why this causes blocking in 2-3 requests: The TLS fingerprint is checked on every single connection. The first request might get through if the site has a permissive initial threshold, but by the second or third request from a "residential IP" with a non-browser TLS signature, the bot score crosses the blocking threshold.

2. Your Browser Fingerprint Stays the Same Across IPs

You rotate from IP-A (Dallas, TX) to IP-B (São Paulo, BR) to IP-C (London, UK). But all three requests carry the same User-Agent string, the same canvas hash, the same WebGL renderer, the same screen resolution, and the same timezone offset.

To the anti-bot system, this pattern is unmistakable: three "different users" from three continents sharing an identical device fingerprint. That doesn't happen with real users.

There's also the consistency problem. If your IP geolocates to London but your browser's Intl.DateTimeFormat().resolvedOptions().timeZone returns America/New_York and your Accept-Language header starts with en-US, the mismatch between network-level and application-level signals flags the request as proxied.
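A rough sketch of that consistency check, written from the scraper's side so you can test a profile before sending it. The `EXPECTED_BY_COUNTRY` table, the `ClientProfile` class, and `find_mismatches` are all hypothetical names introduced for illustration; a real setup would derive the expected values from a GeoIP database.

```python
from dataclasses import dataclass

# Hypothetical lookup table; real values would come from a GeoIP database.
EXPECTED_BY_COUNTRY = {
    "GB": {"timezones": {"Europe/London"}, "languages": {"en-GB", "en"}},
    "US": {
        "timezones": {"America/New_York", "America/Chicago",
                      "America/Denver", "America/Los_Angeles"},
        "languages": {"en-US", "en"},
    },
    "BR": {"timezones": {"America/Sao_Paulo"}, "languages": {"pt-BR", "pt"}},
}


@dataclass
class ClientProfile:
    ip_country: str       # country the proxy IP geolocates to
    timezone: str         # value the browser reports via Intl.DateTimeFormat
    accept_language: str  # raw Accept-Language header


def find_mismatches(profile):
    """Return human-readable mismatches between network and application signals."""
    expected = EXPECTED_BY_COUNTRY.get(profile.ip_country)
    if expected is None:
        return []  # no expectations recorded for this country
    problems = []
    if profile.timezone not in expected["timezones"]:
        problems.append(f"timezone {profile.timezone} does not fit {profile.ip_country}")
    primary = profile.accept_language.split(",")[0].split(";")[0].strip()
    if primary not in expected["languages"]:
        problems.append(f"language {primary} does not fit {profile.ip_country}")
    return problems


# The example from the text: London IP, New York timezone, en-US language.
leaky = ClientProfile("GB", "America/New_York", "en-US,en;q=0.9")
clean = ClientProfile("GB", "Europe/London", "en-GB,en;q=0.9")
print(find_mismatches(leaky))
print(find_mismatches(clean))
```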

Headless browsers add another wrinkle. Unmodified Puppeteer and Playwright instances expose automation markers—navigator.webdriver set to true, missing plugin arrays, and Chrome DevTools Protocol artifacts—that anti-bot systems specifically scan for. An unmodified headless browser is nearly as detectable as a raw HTTP client, so solving the TLS layer alone won't help if your browser environment leaks automation markers at the JavaScript level.

3. Session Cross-Contamination: Your Cookies Follow You to New IPs

This is what experienced scraping engineers call the "silent killer" of proxy rotation.

You rotate to a fresh IP, but your HTTP client reuses the same cookie jar. The target site issued a tracking cookie on your first request. When your second request arrives from a completely different IP but carries the same cookie, the server instantly correlates them. Worse, some sites use that cookie to retroactively flag the original IP, poisoning it for future users of the same proxy pool.

Cross-contamination happens in several ways:

Shared cookie jars across requests that use different proxy IPs

Persistent localStorage or sessionStorage in headless browser instances that aren't properly isolated

Authentication tokens that travel with the session rather than being scoped to a specific IP

HTTP client connection pools that reuse TCP connections across proxy rotations

The fix sounds simple—isolate sessions per IP—but many scraping frameworks default to connection reuse for performance. If you haven't explicitly configured session isolation, you're almost certainly leaking identity across rotations.

4. Your Request Patterns Don't Look Human

Real users browse with irregular timing. They spend 15 seconds on one page, 3 seconds on the next, 45 seconds reading a long article. They follow links. They load images, CSS, and JavaScript. They scroll.

Automated scrapers typically do none of this. They fire requests at fixed intervals (or as fast as possible), skip all subresources, navigate directly to deep URLs without any referrer, and maintain perfectly consistent request timing.

Modern behavioral analysis engines detect these patterns reliably. Specific red flags include:

Uniform request intervals — A 2-second delay between every request is just as suspicious as no delay, because real humans don't operate on a metronome.

High concurrency from a single IP — More than 5-10 concurrent connections from one residential IP is abnormal for household traffic.

Missing referrer chains — Jumping directly to /product/12345 without first visiting the homepage or category page.

No subresource loading — A real browser loads dozens of assets per page. A raw HTTP request loads zero.

Linear crawl patterns — Systematically iterating through paginated results (page=1, page=2, page=3...) in perfect sequence.
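The uniform-interval flag is easy to check against your own request log. The sketch below measures how much the gaps between requests vary, using the coefficient of variation. The 0.1 threshold is an assumption chosen for illustration, not a value any anti-bot vendor publishes.

```python
import statistics


def interval_cv(timestamps):
    """Coefficient of variation (stdev / mean) of the gaps between requests."""
    gaps = [later - earlier for earlier, later in zip(timestamps, timestamps[1:])]
    if len(gaps) < 2:
        raise ValueError("need at least three timestamps")
    mean = statistics.mean(gaps)
    if mean == 0:
        return 0.0
    return statistics.pstdev(gaps) / mean


def looks_metronomic(timestamps, cv_threshold=0.1):
    """True when request gaps are nearly identical (threshold is an assumption)."""
    return interval_cv(timestamps) < cv_threshold


fixed_delay = [0, 2, 4, 6, 8, 10]     # a 2-second sleep between every request
irregular = [0, 15, 18, 63, 70, 95]   # gaps of 15, 3, 45, 7 and 25 seconds

print(looks_metronomic(fixed_delay))
print(looks_metronomic(irregular))
```

Running this over a sample of your scraper's timestamps is a quick way to see whether your delays are as random as you think they are.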

5. Your Proxy Pool Has Contaminated IPs

Not all rotating residential proxy pools are equal. The IP you just rotated to might already be flagged because:

A previous user abused it — Proxy IPs are shared resources. If someone else ran aggressive scraping, spamming, or credential stuffing through that IP last week, its reputation score is already damaged.

Subnet concentration — Your provider's pool might be heavily concentrated in a few /24 subnets. When a site sees dozens of requests from the same subnet within an hour, it blocks the entire range.

Small pool, high overlap — Budget providers with pools under a few million IPs will cycle you back to previously-used (and potentially flagged) IPs quickly.

Stale IPs — IPs that haven't been refreshed or verified recently may already appear on commercial IP reputation blocklists.

We ran a quick pool quality test on a budget provider last quarter: out of 50 random IPs, 32 returned a 403 when we visited the target site through a normal Chrome browser—no scraping, no automation, just a regular page load. That's a 64% contamination rate, and no amount of configuration tuning will fix an IP that's already flagged at the network level.

You can run the same test: take an IP from your pool, configure it as a manual proxy in a regular browser, and try accessing your target site. If it's blocked even with a real browser and natural browsing, the IP itself is the problem—not your configuration.
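If you record the outcome of each manual check, the contamination rate is a one-line calculation. This sketch assumes you log one HTTP status per tested IP; the function name and the choice to count only 403 as blocked are assumptions, and the sample data simply reproduces the 32-out-of-50 result described above using documentation-range addresses.

```python
def contamination_rate(results, blocked_statuses=frozenset({403})):
    """Fraction of tested IPs whose normal-browser visit was blocked.

    results maps each tested IP to the HTTP status observed.
    """
    if not results:
        raise ValueError("no test results supplied")
    blocked = sum(1 for status in results.values() if status in blocked_statuses)
    return blocked / len(results)


# Placeholder addresses: 32 blocked and 18 clean, matching the test in the text.
results = {f"203.0.113.{i}": 403 for i in range(32)}
results.update({f"198.51.100.{i}": 200 for i in range(18)})

rate = contamination_rate(results)
print(f"{rate:.0%} of {len(results)} tested IPs were blocked")
```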

6. You're Using the Wrong Rotation Mode for Your Task

Rotating residential proxies typically offer two modes: per-request rotation (new IP for each request) and sticky sessions (same IP maintained for a set duration, commonly 1-30 minutes, with some providers supporting up to 180 minutes).

Using per-request rotation for stateful workflows is a common misconfiguration. If your task involves login → navigate → interact → extract, rotating the IP between each step makes you look like four different users trying to share one session. Most sites treat this as a session hijacking attempt and terminate the session immediately.

The reverse also causes problems. Using a long sticky session for high-volume stateless collection means you're hammering a single IP with hundreds of requests, which triggers rate limiting.

The rule of thumb: stateful operations (authentication, multi-page checkouts, paginated browsing within a session) need sticky sessions. Stateless collection (scraping independent product pages, checking prices across URLs) works better with per-request rotation and properly isolated sessions.

Quick Diagnosis: Match Your Symptoms to the Root Cause

Use the HTTP response your scraper receives to narrow down the cause:

| Symptom | Most Likely Cause | First Step |
|---|---|---|
| 403 on every IP, including brand-new ones | TLS fingerprint mismatch (your client doesn't look like a browser at the protocol level) | Switch to curl_cffi with impersonate="chrome" or use Playwright/Puppeteer — see Fix #1 below |
| 403 after 3-5 requests, initial ones succeed | Browser fingerprint correlation or cookie cross-contamination | Isolate sessions per IP; rotate fingerprint profile per session — see Fix #2 and #3 |
| 429 Too Many Requests | Rate limiting—you're sending too fast or with too much concurrency | Reduce concurrency to 2-3 per IP; add randomized delays; respect Retry-After headers — see Fix #4 |
| CAPTCHA loops across different IPs | Behavioral analysis or fingerprint detection triggering challenges | Reduce request speed; add realistic browsing patterns; verify stealth configuration — see Fix #2 and #4 |
| Homepage loads, but login/checkout fails | Session inconsistency—you're rotating IPs mid-workflow where sticky sessions are needed | Switch to sticky session mode for stateful operations — see Fix #6 |
| Some IPs work, others fail immediately | Contaminated IP pool—specific IPs are pre-flagged | Test IPs manually in a real browser; switch proxy provider or request a different pool segment — see Fix #5 |
| Everything fails on one target, works on others | Target site's specific anti-bot policy (e.g., aggressive Cloudflare configuration) | Use full browser automation; reduce concurrency significantly; verify your access complies with the site's ToS |

Key diagnostic principle: change only one variable at a time. If you swap your proxy provider, switch to Playwright, and add random delays all at once, you won't know which change fixed the problem—or which might cause the next one.
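Part of the diagnosis can be encoded as a small triage helper for scraper logs. This is a deliberately simplified sketch covering only the 403 and 429 rows; the `Observation` fields and the `likely_cause` function are hypothetical names, and real cases will often need the manual checks described in the fixes.

```python
from dataclasses import dataclass


@dataclass
class Observation:
    status: int                    # HTTP status of the blocked response
    ok_requests_before_block: int  # successful requests on an IP before the block
    blocked_ip_share: float        # fraction of tested IPs that were blocked (0.0 to 1.0)


def likely_cause(obs):
    """Map an observed failure pattern to the most likely root cause."""
    if obs.status == 429:
        return "rate limiting"
    if obs.status != 403:
        return "unclassified"
    if obs.ok_requests_before_block > 0:
        return "fingerprint correlation or cookie cross-contamination"
    if obs.blocked_ip_share >= 1.0:
        return "TLS fingerprint mismatch"
    return "contaminated IP pool"


print(likely_cause(Observation(403, 0, 1.0)))   # every IP fails from the first request
print(likely_cause(Observation(403, 4, 1.0)))   # first few requests succeed
print(likely_cause(Observation(403, 0, 0.4)))   # only some IPs fail immediately
print(likely_cause(Observation(429, 20, 0.2)))
```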

How to Fix Each Blocking Trigger

Fix #1: TLS Fingerprint Mismatch

You have two reliable paths:

Option A: Use a real browser engine. Playwright and Puppeteer launch actual Chromium instances, which naturally produce authentic TLS fingerprints because they are real browsers. This is the most reliable approach, though it consumes more memory and CPU than lightweight HTTP clients.

Option B: Use a TLS-impersonating HTTP library. For Python, curl_cffi is the go-to solution. It wraps curl-impersonate, which modifies the underlying TLS library to produce browser-matching fingerprints. The API mirrors Python's requests library, so migration is straightforward:

```python
# Install: pip install curl_cffi
from curl_cffi import requests

proxy = "http://USERNAME:PASSWORD@proxy-host:port"

response = requests.get(
    "https://target-site.com/page",
    impersonate="chrome",
    proxies={"http": proxy, "https": proxy},
)
print(response.status_code)  # 200 instead of 403
```

The impersonate="chrome" parameter configures curl_cffi to use the same TLS settings that a standard Chrome browser uses—cipher suites, extension ordering, and HTTP/2 configuration. Replace USERNAME:PASSWORD@proxy-host:port with your actual proxy credentials from your provider's dashboard.

Verify: Use the JA3 fingerprint checker at Scrapfly's web scraping tools page to confirm your JA3 hash matches a recognized Chrome signature.

Fix #2: Browser Fingerprint Consistency

For browser-based scraping, stealth plugins correct automation artifacts that cause headless browsers to be misclassified as bots. Minimal setup for Playwright with playwright-stealth:

```python
# Install: pip install playwright playwright-stealth
# Then: playwright install chromium
from playwright.sync_api import sync_playwright
# stealth_sync is the playwright-stealth 1.x entry point; newer releases may expose a different API
from playwright_stealth import stealth_sync

with sync_playwright() as p:
    browser = p.chromium.launch(
        proxy={"server": "http://proxy-host:port",
               "username": "USERNAME", "password": "PASSWORD"}
    )
    context = browser.new_context(
        locale="en-US",
        timezone_id="America/New_York",  # Match your proxy IP's geo
        viewport={"width": 1920, "height": 1080}
    )
    page = context.new_page()
    stealth_sync(page)
    page.goto("https://target-site.com")
```

When you switch to a new proxy IP, update the browser context to match: timezone, Accept-Language, and locale should all align with the IP's geolocation. A US IP should have en-US language and a US timezone, not Asia/Tokyo carried over from a previous session.

Fix #3: Isolate Sessions Per IP

Every IP rotation should start with a clean session state. In Playwright, browser.new_context() creates a fully isolated environment with its own cookie store, localStorage, cache, and network state. Calling context.close() wipes everything clean:

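```python
from playwright.sync_api import sync_playwright
from playwright_stealth import stealth_sync

# Placeholder endpoints; replace with the proxies from your provider
proxy_list = ["http://proxy-host-1:port", "http://proxy-host-2:port"]

with sync_playwright() as p:
    browser = p.chromium.launch()
    # Each proxy IP gets its own isolated context
    for proxy_ip in proxy_list:
        context = browser.new_context(
            proxy={"server": proxy_ip,
                   "username": "USER", "password": "PASS"}
        )
        page = context.new_page()
        stealth_sync(page)
        page.goto("https://target-site.com/page")
        data = page.content()
        context.close()  # Cookies, storage, cache all destroyed
    browser.close()
```

If you're using raw HTTP clients like curl_cffi, instantiate a new Session() object per IP rather than reusing a persistent one, and ensure HTTP connection pooling doesn't reuse TCP connections across proxy switches.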
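The same isolation idea for a plain HTTP client can be sketched with the standard library alone. This example uses urllib only to show the pattern of one fresh cookie jar per proxy; urllib does not produce a browser-like TLS fingerprint, so in practice you would apply the same pattern to a curl_cffi session. The proxy URLs are placeholders and `new_isolated_opener` is a hypothetical helper name.

```python
import urllib.request
from http.cookiejar import CookieJar


def new_isolated_opener(proxy_url):
    """Build an opener bound to one proxy with its own empty cookie jar."""
    jar = CookieJar()
    opener = urllib.request.build_opener(
        urllib.request.ProxyHandler({"http": proxy_url, "https": proxy_url}),
        urllib.request.HTTPCookieProcessor(jar),
    )
    return opener, jar


# Placeholder proxy endpoints; nothing is sent over the network here.
opener_a, jar_a = new_isolated_opener("http://USER:PASS@proxy-host-1:8000")
opener_b, jar_b = new_isolated_opener("http://USER:PASS@proxy-host-2:8000")

print("separate jars:", jar_a is not jar_b)
print("cookies at start:", len(jar_a), len(jar_b))
```

Cookies set while using `opener_a` land only in `jar_a`, so nothing issued to the first IP can travel with requests sent through the second.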

Fix #4: Humanize Your Request Patterns

Replace fixed delays with randomized intervals:

Base delay between requests: 2-8 seconds, randomly sampled per request

Add jitter: ±30% randomization on top of the base delay

Concurrency per IP: Cap at 2-5 concurrent requests per exit IP

Exponential backoff on errors: Start at 2-3 seconds, double on each consecutive failure, cap at 30-60 seconds. Add random jitter (AWS's architecture blog recommends "full jitter") to prevent retry storms.

Request sequencing: Visit the homepage first, then a category page, then the target page. Load at least some subresources. Include realistic referrer headers.
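The timing rules above can be sketched in a few lines. The function names and default values are assumptions taken from the ranges in this list, and the backoff follows the "full jitter" approach, where the wait is drawn uniformly between zero and the capped exponential ceiling.

```python
import random


def next_delay(rng, low=2.0, high=8.0, jitter=0.30):
    """Random base delay in [low, high], then scaled by +/- jitter."""
    base = rng.uniform(low, high)
    return base * (1 + rng.uniform(-jitter, jitter))


def backoff_delay(rng, failures, base=2.0, cap=60.0, retry_after=None):
    """Exponential backoff with full jitter; a server Retry-After value wins."""
    if retry_after is not None:
        return float(retry_after)
    ceiling = min(cap, base * 2 ** (failures - 1))
    return rng.uniform(0, ceiling)


rng = random.Random()  # pass random.Random(seed) for reproducible runs
print(f"wait {next_delay(rng):.1f}s before the next request")
for failures in (1, 2, 3, 6):
    print(f"after {failures} failure(s): back off {backoff_delay(rng, failures):.1f}s")
```

In a scraper loop you would call `time.sleep(next_delay(rng))` between successful requests and `time.sleep(backoff_delay(rng, failures))` after each consecutive error.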

Fix #5: Test and Switch Your Proxy Pool

Before blaming your configuration, verify whether the IPs themselves are clean:

Take 10-20 IPs from your pool and test them manually—configure each as a browser proxy and visit your target site normally. If more than 20-30% fail even with a real browser, the pool quality is the issue, not your setup.

Check geographic distribution. If most of your IPs fall into a small number of subnets, request a more diverse allocation from your provider.

Test at different times of day. Some proxy pools have higher contamination during peak usage hours.
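Subnet concentration is simple to measure once you have a sample of exit IPs. The sketch below groups IPv4 addresses by /24 and reports the share held by the largest subnet. The function names are hypothetical and the sample addresses come from documentation ranges.

```python
import ipaddress
from collections import Counter


def subnet_counts(ips, prefix=24):
    """Count how many sampled IPs fall into each subnet of the given prefix length."""
    counts = Counter()
    for ip in ips:
        network = ipaddress.ip_network(f"{ip}/{prefix}", strict=False)
        counts[str(network)] += 1
    return counts


def top_subnet_share(ips, prefix=24):
    """Fraction of the sample that sits in the single most common subnet."""
    if not ips:
        raise ValueError("no IPs supplied")
    counts = subnet_counts(ips, prefix)
    return counts.most_common(1)[0][1] / len(ips)


sample = ["198.51.100.7", "198.51.100.42", "198.51.100.200",
          "203.0.113.5", "192.0.2.77"]
print(subnet_counts(sample))
print(f"largest /24 holds {top_subnet_share(sample):.0%} of the sample")
```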

When evaluating providers, key metrics are: pool size, daily IP refresh rate, success rate monitoring, and sticky session support. For context, Proxy001 maintains 100M+ residential IPs across 200+ regions with 100,000+ new IPs added daily, a 98.9% real-time success rate, and sticky sessions up to 180 minutes.

Fix #6: Match Rotation Mode to Your Task

Stateless data collection (independent URLs, price checks, SERP scraping): Use per-request rotation. Each request is self-contained, so a fresh IP per request maximizes coverage and minimizes rate-limit accumulation.

Stateful workflows (login flows, multi-step forms, paginated browsing within a session): Use sticky sessions. Set the session duration to cover your entire workflow with a safety margin—if your login → navigate → extract flow takes 3 minutes, set a 10-minute sticky session.

Hybrid tasks (log in with sticky session, then collect data with rotation): Use a sticky session for authentication, capture the auth cookies, then switch to per-request rotation for the data collection phase—each rotated request carries the auth cookies while everything else (fingerprint, non-auth cookies) resets.
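One way to keep these rules from drifting is to encode them next to the job definition. This is a sketch under stated assumptions: the `Phase` and `RotationMode` types are hypothetical, and the safety factor of 3 with a 10-minute floor is just one way to reproduce the "3-minute flow, 10-minute session" guidance above.

```python
import math
from dataclasses import dataclass
from enum import Enum


class RotationMode(Enum):
    PER_REQUEST = "per_request"
    STICKY = "sticky"


@dataclass
class Phase:
    name: str
    stateful: bool
    expected_minutes: float = 0.0


def plan_phase(phase, safety_factor=3.0, min_sticky_minutes=10):
    """Return (rotation mode, sticky duration in minutes or None) for one phase."""
    if not phase.stateful:
        return RotationMode.PER_REQUEST, None
    padded = math.ceil(phase.expected_minutes * safety_factor)
    return RotationMode.STICKY, max(min_sticky_minutes, padded)


# A hybrid task: authenticate on a sticky session, then collect with rotation.
hybrid_task = [Phase("login", stateful=True, expected_minutes=3),
               Phase("collect product pages", stateful=False)]
for phase in hybrid_task:
    print(phase.name, plan_phase(phase))
```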

Recommended Safe Defaults

| Parameter | Recommended Range | Why |
|---|---|---|
| Concurrency per exit IP | 2-5 | Matches realistic household browsing load |
| Base delay between requests | 2-8 seconds (randomized) | Falls within natural human browsing intervals |
| Jitter on delay | ±30% | Prevents detectable timing patterns |
| Backoff on 429/error | Exponential, 2s → 60s cap, with full jitter | Avoids retry storms; respects server signals |
| Sticky session duration | 10-30 minutes for typical workflows | Covers multi-step tasks with safety margin |
| Test batch size before scaling | 20-50 requests | Enough to detect pool quality issues without burning budget |

Start conservative and scale up gradually. It's far easier to increase concurrency after confirming your setup works than to recover from getting your entire proxy pool flagged on a target site.
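The per-IP concurrency cap is the default most often enforced by accident rather than by design. A minimal sketch of one way to enforce it uses one asyncio semaphore per exit IP. `PerIPLimiter` is a hypothetical helper, the cap of 3 is an assumption within the recommended range, and `fake_request` stands in for a real HTTP call.

```python
import asyncio


class PerIPLimiter:
    """Caps the number of in-flight requests for each exit IP."""

    def __init__(self, max_per_ip=3):
        self._max = max_per_ip
        self._semaphores = {}

    def _semaphore(self, exit_ip):
        if exit_ip not in self._semaphores:
            self._semaphores[exit_ip] = asyncio.Semaphore(self._max)
        return self._semaphores[exit_ip]

    async def run(self, exit_ip, request_factory):
        """Await request_factory() once a slot for exit_ip is free."""
        async with self._semaphore(exit_ip):
            return await request_factory()


async def demo(max_per_ip=3, total_requests=10):
    limiter = PerIPLimiter(max_per_ip)
    in_flight = 0
    peak = 0

    async def fake_request():
        # Stand-in for a real HTTP call; tracks how many run at once.
        nonlocal in_flight, peak
        in_flight += 1
        peak = max(peak, in_flight)
        await asyncio.sleep(0.01)
        in_flight -= 1

    await asyncio.gather(
        *(limiter.run("203.0.113.5", fake_request) for _ in range(total_requests))
    )
    return peak


peak = asyncio.run(demo())
print("peak concurrent requests on one IP:", peak)
```

Raising the cap later is a one-argument change, which fits the advice to start conservative and scale up only after the setup is stable.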

Key Takeaways

IP rotation alone doesn't prevent blocking. Modern anti-bot systems check TLS fingerprints, browser fingerprints, session behavior, and request patterns independently. Failing any single layer triggers detection.

TLS fingerprint mismatch is the #1 cause of instant blocking. If you're using Python requests or similar HTTP libraries, your TLS handshake identifies you as a bot before any HTML loads. Switch to curl_cffi with browser impersonation or use a real browser engine.

Diagnose before you fix. Use the HTTP status code and failure pattern to identify which detection layer you're failing, then apply the targeted fix. Changing everything at once wastes time and obscures what actually works.

Isolate everything per IP. Cookies, sessions, fingerprint profiles, and browser contexts should all reset when you rotate to a new IP. Cross-contamination is the most overlooked cause of proxy blocking.

Start conservative. 2-5 concurrent requests per IP, 2-8 second randomized delays, and exponential backoff on errors. Scale up only after verifying stability.

Need a residential proxy pool built for reliability at scale? Proxy001 offers 100M+ rotating residential IPs, a 98.9% success rate, sticky sessions up to 180 minutes, and SDKs for Python, Node.js, Puppeteer, and Selenium.
